Opinion: Ontology Logs for the working data scientist

We need a way to speak about what we develop

Data scientists often do a wide range of things beyond training models. They have to communicate with business users about what they are working on. On top of that, they also have to write production-ready code that can readily be handed over to technology teams and deployed. Contrary to what many might think (even many working data scientists), a data scientist's work does not usually stop at having a "predict" method in their classes. At the end of the day, for an AI model to be useful, it has to be incorporated into a broader application or system.

The thing is, the creative process of a data scientist does not usually end up in a place that is easily intelligible to business and technology collaborators. The data scientist is mostly concerned with how well their model works and with getting to a point where its efficacy can be demonstrated. In doing so, they might end up with rather clean code for model training and inference but spaghetti code wherever the model interfaces with the rest of the application.

It is therefore important to have a way to communicate the key concepts in the application and how they relate to the AI models. One way to do this is via Ontology Logs, or OLogs.

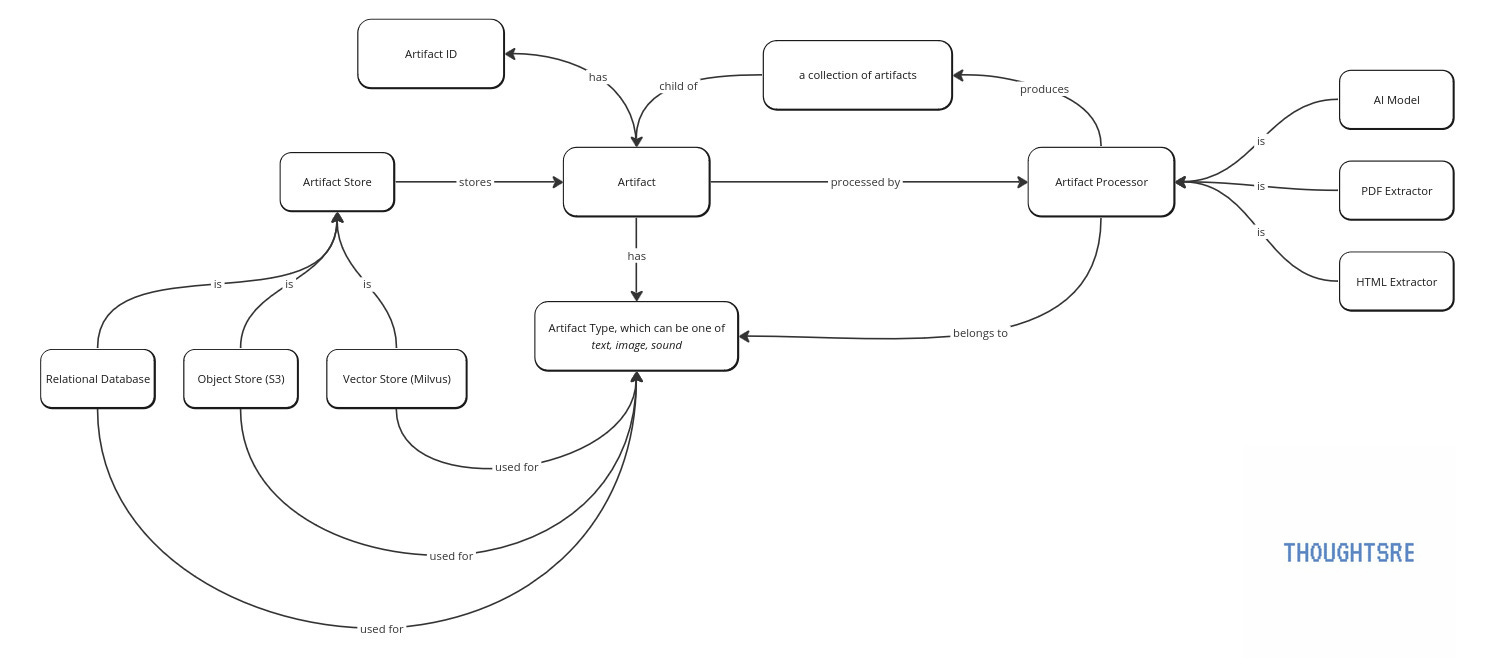

An OLog is a mathematical apparatus from Category Theory that is used to represent knowledge diagrammatically. Each OLog is a graph whose nodes are the objects in the universe of the application (some might think of them as types or classes) and whose edges are directed. Drawing an OLog follows a few simple rules. For example, each edge represents a functional relationship between two nodes, i.e. every instance of the source node must lead to one and only one instance of the target node. Nodes are labelled with noun phrases such as "an artifact" and edges with verb phrases such as "has as type", which is what lets the diagram be read as sentences.
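To make the "functional relationship" rule concrete, here is a minimal Python sketch (not the author's tooling or any standard library) that models one OLog edge as a dictionary from source instances to target instances. A dictionary can hold at most one value per key, so the remaining check is totality: every source instance must be mapped, and only to valid target instances. The `Arrow` class, the `is_functional` and `read_aloud` helpers, and all instance names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Arrow:
    """One OLog edge: a labelled, directed, functional relationship."""
    source: str    # noun phrase for the source node, e.g. "artifact"
    label: str     # verb phrase read between the two nodes
    target: str    # noun phrase for the target node
    mapping: dict  # hypothetical instance data: source instance -> target instance


def is_functional(arrow: Arrow, source_instances: set, target_instances: set) -> bool:
    """True if every source instance maps to exactly one valid target instance.

    A dict cannot hold two values for one key, so "at most one" is guaranteed;
    this checks "at least one" (totality) and that every image is a real target.
    """
    return set(arrow.mapping) == source_instances and all(
        value in target_instances for value in arrow.mapping.values()
    )


def read_aloud(arrow: Arrow) -> str:
    """Render the edge as the natural-language sentence the OLog encodes."""
    return f"Each {arrow.source} {arrow.label} exactly one {arrow.target}."


# Hypothetical instances of two OLog nodes.
artifacts = {"report-pdf", "page-1", "logo-png"}
artifact_types = {"text", "image", "sound"}

is_of_type = Arrow(
    source="artifact",
    label="is of",
    target="artifact type",
    mapping={"report-pdf": "text", "page-1": "text", "logo-png": "image"},
)

print(read_aloud(is_of_type))
print(is_functional(is_of_type, artifacts, artifact_types))

# Leaving instances unmapped breaks totality, so this edge is not functional.
partial = Arrow("artifact", "is of", "artifact type", {"report-pdf": "text"})
print(is_functional(partial, artifacts, artifact_types))
```

Running it prints the sentence "Each artifact is of exactly one artifact type.", then `True` for the complete mapping and `False` for the partial one. The point of the design is that the same structure serves both audiences: the check matters to developers, the sentence to business users.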

Compared with other common diagrams such as UML diagrams, OLogs can be read out in natural language, as we shall see later, which makes them a great tool for communication between technical and business collaborators.

I cannot stress this last point, that OLogs can be read out in natural language, enough. Too often I have seen code devolve into spaghetti (many times my own) because the concepts that exist in an application, and how they relate to each other, are not made clear up front, so we end up writing one-off methods or functions to handle edge cases.

In what follows, I will use an AI-enabled knowledge base ingestion module as an example. The purpose of the module is to ingest content and extract information from it that will later be searchable. The diagram below shows an OLog for such a knowledge base module. The diagram is highly simplified, as our focus is on the OLog itself.

So let me put the OLog into words for you now…

In this module, we have artifacts. Each artifact belongs to an artifact type, which can be text, image or sound. Artifacts are stored in artifact stores, which can be a relational database, S3 or a vector store. Each artifact store is used to store a particular artifact type. Each artifact can be processed by artifact processors, which in turn generate a collection of artifacts that are child artifacts of the parent artifact. At present, the available artifact processors are a PDF extractor, an HTML extractor and some "AI model" which does whatever.
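One way to carry this description straight into code is to give each OLog node a type and each edge an attribute. The sketch below is a hypothetical Python rendering, not a definitive design: the names `ArtifactType`, `StoreKind`, `ArtifactStore`, `Artifact` and `stores_accepting` are illustrative, no real database, S3 or vector-store client is involved, and which store holds which artifact type is an assumed configuration.

```python
from __future__ import annotations  # lets Artifact refer to itself in annotations

from dataclasses import dataclass, field
from enum import Enum
from uuid import uuid4


class ArtifactType(Enum):
    """Node "an artifact type": text, image or sound."""
    TEXT = "text"
    IMAGE = "image"
    SOUND = "sound"


class StoreKind(Enum):
    """The kinds of artifact store named in the OLog."""
    RELATIONAL_DB = "relational database"
    S3 = "S3"
    VECTOR_STORE = "vector store"


@dataclass(frozen=True)
class ArtifactStore:
    """Node "an artifact store"; edge "stores" -> exactly one ArtifactType."""
    name: str
    kind: StoreKind
    stored_type: ArtifactType


@dataclass
class Artifact:
    """Node "an artifact"; edge "is of" -> exactly one ArtifactType."""
    type: ArtifactType
    content: object
    id: str = field(default_factory=lambda: str(uuid4()))
    children: list[Artifact] = field(default_factory=list)


def stores_accepting(artifact: Artifact, stores: list[ArtifactStore]) -> list[ArtifactStore]:
    """Follow the edges: return every store whose stored type matches the artifact's type."""
    return [store for store in stores if store.stored_type is artifact.type]


# Hypothetical configuration; the assignment of types to stores is a design choice.
stores = [
    ArtifactStore("documents", StoreKind.RELATIONAL_DB, ArtifactType.TEXT),
    ArtifactStore("media", StoreKind.S3, ArtifactType.IMAGE),
    ArtifactStore("embeddings", StoreKind.VECTOR_STORE, ArtifactType.TEXT),
]

page = Artifact(ArtifactType.TEXT, "Quarterly results summary")
print([store.name for store in stores_accepting(page, stores)])  # ['documents', 'embeddings']
```

Because every class and field traces back to a box or an arrow in the diagram, a reviewer can check the code against the OLog sentence by sentence.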

Notice what happened. I now have a diagram that I can put into words and that serves as a common platform for business and technical collaborators to discuss and reason about the application using a unified taxonomy. This is very valuable.

For example, the development team now knows that we need an Artifact class that has an ID attribute as well as child artifacts. The data scientist knows that the output of the models they design should be castable into an Artifact object, which will expose some standard API for interacting with it.
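From the data scientist's side, that contract can be as small as "take an Artifact, return child Artifacts". The sketch below assumes a hypothetical `ArtifactProcessor` protocol with a single `process` method and a toy `KeywordModel` standing in for the "AI model" in the OLog (it simply picks capitalised words). A `KeywordProcessor` adapter casts the model's raw output, a list of strings, into text Artifacts and attaches them as children of the parent. None of these names come from a real library; a PDF or HTML extractor would plug into the same interface.

```python
from __future__ import annotations  # lets Artifact refer to itself in annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import Protocol
from uuid import uuid4


class ArtifactType(Enum):
    TEXT = "text"
    IMAGE = "image"
    SOUND = "sound"


@dataclass
class Artifact:
    """Minimal Artifact: an ID, a type, some content and its child artifacts."""
    type: ArtifactType
    content: object
    id: str = field(default_factory=lambda: str(uuid4()))
    children: list[Artifact] = field(default_factory=list)


class ArtifactProcessor(Protocol):
    """Edge "is processed by": a processor turns one artifact into child artifacts."""
    def process(self, artifact: Artifact) -> list[Artifact]: ...


class KeywordModel:
    """Hypothetical stand-in for a trained model: returns capitalised words as keywords."""
    def predict(self, text: str) -> list[str]:
        return [word.strip(".,") for word in text.split() if word[:1].isupper()]


class KeywordProcessor:
    """Adapter that casts the model's raw output into Artifact objects."""
    def __init__(self, model: KeywordModel) -> None:
        self.model = model

    def process(self, artifact: Artifact) -> list[Artifact]:
        if artifact.type is not ArtifactType.TEXT:
            return []  # this processor only understands text artifacts
        keywords = self.model.predict(str(artifact.content))
        children = [Artifact(ArtifactType.TEXT, keyword) for keyword in keywords]
        artifact.children.extend(children)  # outputs become child artifacts of the parent
        return children


def run(processor: ArtifactProcessor, artifact: Artifact) -> list[Artifact]:
    """Any object with a matching process() method satisfies the protocol."""
    return processor.process(artifact)


doc = Artifact(ArtifactType.TEXT, "Revenue grew in Europe and Asia.")
children = run(KeywordProcessor(KeywordModel()), doc)
print([child.content for child in children])  # ['Revenue', 'Europe', 'Asia']
```

Keeping the model behind an adapter means the data scientist can swap models freely, while the rest of the application only ever sees Artifacts.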

Final note: the OLog represents the designer's world view. It need not reflect objective truth. For example, there is no need to treat all content in the knowledge base as an Artifact; that is a design choice. Nonetheless, we now have a unified way to speak about the application we are trying to build.